I have tried to identify where the problem could be coming from.

I found that parameter_processors is only used in the function get_parameter_processors in the file edx/xblock-lti-consumer/lti-consumer/lti_xblock.py. This function reads the value of XBLOCK_SETTINGS["lti_consumer"]["parameter_processors"], which in my case is:

    ['tahoe_lti.processors:basic_user_info',
     'tahoe_lti.processors:personal_user_info',
     'tahoe_lti.processors:cohort_info',
     'tahoe_lti.processors:team_info']

The function then splits each entry into the Python module to import (the characters before the “:” symbol, e.g. tahoe_lti.processors) and the function to fetch from that module (the characters after the “:” symbol, e.g. basic_user_info). Here is the code (quite simple):

    def get_parameter_processors(self):
        """
        Read the parameter processor functions from the settings and return their functions.
        """
        if not self.enable_processors:
            return
        try:
            for path in self.get_settings().get('parameter_processors', []):
                module_path, func_name = path.split(':', 1)
                module = import_module(module_path)
                yield getattr(module, func_name)
        except Exception:
            log.exception('Something went wrong in reading the LTI XBlock configuration.')
            raise
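
To make the "module:function" resolution concrete, here is a minimal standalone sketch of the same split-and-import step. The helper name resolve_processor is hypothetical (it is not part of lti_xblock.py), and 'os.path:basename' is only a stand-in path so the example runs without tahoe_lti installed.

```python
from importlib import import_module


def resolve_processor(path):
    """Resolve a 'module.path:function_name' string to the function object.

    Hypothetical helper mirroring the loop body of get_parameter_processors.
    """
    # Split on the first ":" only, as the original code does.
    module_path, func_name = path.split(':', 1)
    module = import_module(module_path)
    return getattr(module, func_name)


# Stand-in path: any importable "module:function" pair works the same way.
basename = resolve_processor('os.path:basename')
print(basename('/openedx/venv/lti_xblock.py'))  # prints "lti_xblock.py"
```

A path whose module cannot be imported raises ImportError, and a missing function name raises AttributeError; in the original method both would be logged and re-raised by the except block.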

All the functions of the tahoe_lti.processors module (basic_user_info, etc.) just return a dictionary mapping keys (strings) to values (strings). If my understanding is correct, the idea is that all these key-value pairs should be sent by edX to the LTI Tool. So get_parameter_processors only returns a generator of functions, each of which returns a dictionary mapping strings to strings.
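
The sketch below shows how I understand these pieces to fit together. It is an assumption about the caller, not code taken from lti_xblock.py: the two processors are made-up stand-ins, and collect_extra_parameters is a hypothetical name for whatever code iterates over the generator and merges the returned dictionaries.

```python
def basic_user_info(xblock):
    # Made-up stand-in for a processor such as tahoe_lti.processors:basic_user_info.
    return {'lis_person_full_name': 'Jane Doe'}


def cohort_info(xblock):
    # Second made-up processor returning another string-to-string mapping.
    return {'custom_cohort_name': 'cohort-a'}


def get_parameter_processors():
    # Generator of functions, like the method above (settings lookup omitted).
    yield basic_user_info
    yield cohort_info


def collect_extra_parameters(xblock):
    """Assumed merge step: call each processor and merge the dicts it returns."""
    params = {}
    for processor in get_parameter_processors():
        params.update(processor(xblock) or {})
    return params


print(collect_extra_parameters(xblock=None))
```

Because get_parameter_processors is a generator, its body only runs when something iterates over it, so the processors have an effect only on code paths that actually consume the generator.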

On my Tutor installation, the file lti_xblock.py can be found on 8 different paths:

    tutor_local_cms_1:/openedx/venv/lib64/python3.8/site-packages/lti_consumer/
    tutor_local_lms_1:/openedx/venv/lib64/python3.8/site-packages/lti_consumer/
    tutor_local_cms_1:/openedx/venv/lib/python3.8/site-packages/lti_consumer/
    tutor_local_lms_1:/openedx/venv/lib/python3.8/site-packages/lti_consumer/
    tutor_local_lms-worker_1:/openedx/venv/lib64/python3.8/site-packages/lti_consumer/
    tutor_local_lms-worker_1:/openedx/venv/lib/python3.8/site-packages/lti_consumer/
    tutor_local_cms-worker_1:/openedx/venv/lib64/python3.8/site-packages/lti_consumer/
    tutor_local_cms-worker_1:/openedx/venv/lib/python3.8/site-packages/lti_consumer/

According to the diff command, all the files are exactly the same.

I then tried to modify get_parameter_processors in all 8 files as follows:

    def get_parameter_processors(self):
        """
        Read the parameter processor functions from the settings and return their functions.
        """
        def test(xblock):
            return {'ronan': 'blabla', 'test': 'ronan'}
        for i in range(1):
            yield test

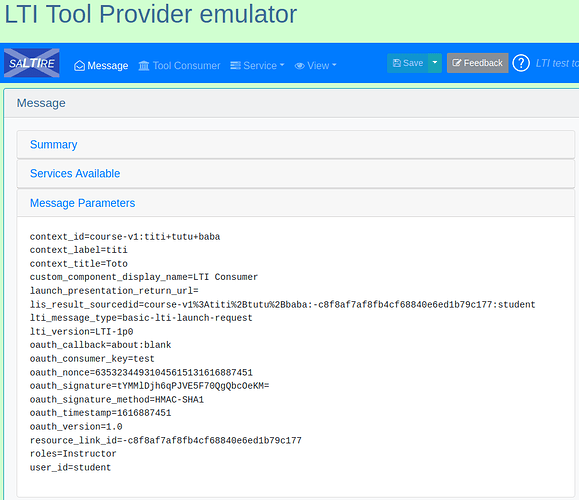

With this new code, I expected edX to send the key-value pairs ronan=blabla and test=ronan regardless of XBLOCK_SETTINGS. But when I check the LTI Tool Provider Emulator, none of these parameters are sent (see the picture above), even after stopping and restarting Tutor (my changes are still there after the restart). I also tried {'lis_person_full_name': 'ronan'} instead of {'ronan': 'blabla', 'test': 'ronan'}, just to be sure that unexpected keys are not filtered out later in the code ('lis_person_full_name' is part of the LTI nomenclature).
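
One check that might narrow this down is to ask Python which file it would actually load for a module, from inside the running container. The sketch below uses only the standard library; module_origin is a hypothetical helper name, and 'json' is used so the example runs anywhere. In the LMS container the name to pass would presumably be 'lti_consumer.lti_xblock'.

```python
import importlib.util


def module_origin(module_name):
    """Return the file path Python would load for module_name, or None if not found."""
    try:
        spec = importlib.util.find_spec(module_name)
    except ModuleNotFoundError:
        # Raised when a parent package of a dotted name is missing.
        return None
    return spec.origin if spec is not None else None


# Stand-in module so the sketch is runnable outside the edX containers.
print(module_origin('json'))
```

If the printed path is not one of the 8 edited files, the running process is importing the code from somewhere else.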

@regis, are there other files I should modify for my changes to be taken into account? If not, do you have any idea why my modifications seem to have no effect?