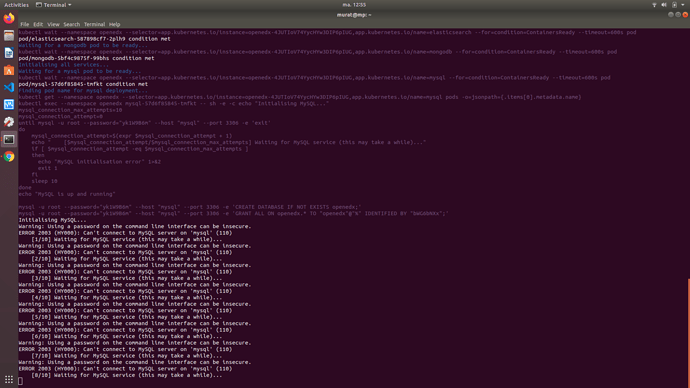

Can confirm this issue. I'm running the tutor k8s quickstart with all default settings, the minio plugin installed, 6GB of memory for minikube (4GB didn't work) and the minikube ingress addon enabled, on minikube v1.7.2 with Kubernetes v1.17.2.

It seems to be related to this issue: "Pod unable to reach itself through a service (unless --cni=true is set)" (kubernetes/minikube issue #1568 on GitHub), which makes pods in minikube unable to reach themselves through their own service's cluster IP.
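To check whether a pod can reach a given service at all, a small sketch like the following could be run with the Python interpreter inside the pod. It only tries to open a TCP connection and reports the error if that fails. The function name `can_connect` is hypothetical, and the host/port you pass (for example the MySQL service name and port 3306) are assumptions about your deployment, not values confirmed in this thread.

```python
import socket
import sys


def can_connect(host, port, timeout=3.0):
    """Return True if a TCP connection to (host, port) succeeds within `timeout` seconds.

    Hypothetical helper. Written without f-strings or type hints so it should
    also run under the Python 2.7 interpreter used by the Open edX image.
    """
    try:
        # create_connection resolves the name and tries each returned address in turn
        conn = socket.create_connection((host, port), timeout=timeout)
    except socket.error as exc:  # connection refused, timeout, or DNS (gaierror) failure
        sys.stderr.write("cannot reach %s:%s: %s\n" % (host, port, exc))
        return False
    conn.close()
    return True
```

If a call such as `can_connect("mysql", 3306)` (service name assumed) fails from the MySQL pod itself but succeeds from another pod, that points to the hairpin problem described in the minikube issue.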

This could have been avoided if the MySQL migration were either run directly against localhost (which would work if you exec into the MySQL server container) or run from a separate pod.

This workaround from the thread worked for me:

```shell
minikube ssh
sudo ip link set docker0 promisc on
```

This got me one step further. However, I’m still unable to successfully deploy to minikube, as one of the migrations failed:

```
Running migrations:
  Applying certificates.0003_data__default_modes...Traceback (most recent call last):
  File "./manage.py", line 123, in <module>
    execute_from_command_line([sys.argv[0]] + django_args)
  File "/openedx/venv/local/lib/python2.7/site-packages/django/core/management/__init__.py", line 364, in execute_from_command_line
    utility.execute()
  File "/openedx/venv/local/lib/python2.7/site-packages/django/core/management/__init__.py", line 356, in execute
    self.fetch_command(subcommand).run_from_argv(self.argv)
  File "/openedx/venv/local/lib/python2.7/site-packages/django/core/management/base.py", line 283, in run_from_argv
    self.execute(*args, **cmd_options)
  File "/openedx/venv/local/lib/python2.7/site-packages/django/core/management/base.py", line 330, in execute
    output = self.handle(*args, **options)
  File "/openedx/venv/local/lib/python2.7/site-packages/django/core/management/commands/migrate.py", line 204, in handle
    fake_initial=fake_initial,
  File "/openedx/venv/local/lib/python2.7/site-packages/django/db/migrations/executor.py", line 115, in migrate
    state = self._migrate_all_forwards(state, plan, full_plan, fake=fake, fake_initial=fake_initial)
  File "/openedx/venv/local/lib/python2.7/site-packages/django/db/migrations/executor.py", line 145, in _migrate_all_forwards
    state = self.apply_migration(state, migration, fake=fake, fake_initial=fake_initial)
  File "/openedx/venv/local/lib/python2.7/site-packages/django/db/migrations/executor.py", line 244, in apply_migration
    state = migration.apply(state, schema_editor)
  File "/openedx/venv/local/lib/python2.7/site-packages/django/db/migrations/migration.py", line 126, in apply
    operation.database_forwards(self.app_label, schema_editor, old_state, project_state)
  File "/openedx/venv/local/lib/python2.7/site-packages/django/db/migrations/operations/special.py", line 193, in database_forwards
    self.code(from_state.apps, schema_editor)
  File "/openedx/edx-platform/lms/djangoapps/certificates/migrations/0003_data__default_modes.py", line 24, in forwards
    File(open(settings.PROJECT_ROOT / 'static' / 'images' / 'default-badges' / file_name))
  File "/openedx/venv/local/lib/python2.7/site-packages/django/db/models/fields/files.py", line 94, in save
    self.name = self.storage.save(name, content, max_length=self.field.max_length)
  File "/openedx/venv/local/lib/python2.7/site-packages/django/core/files/storage.py", line 54, in save
    return self._save(name, content)
  File "/openedx/venv/local/lib/python2.7/site-packages/storages/backends/s3boto.py", line 409, in _save
    key = self.bucket.get_key(encoded_name)
  File "/openedx/venv/local/lib/python2.7/site-packages/boto/s3/bucket.py", line 193, in get_key
    key, resp = self._get_key_internal(key_name, headers, query_args_l)
  File "/openedx/venv/local/lib/python2.7/site-packages/boto/s3/bucket.py", line 200, in _get_key_internal
    query_args=query_args)
  File "/openedx/venv/local/lib/python2.7/site-packages/boto/s3/connection.py", line 665, in make_request
    retry_handler=retry_handler
  File "/openedx/venv/local/lib/python2.7/site-packages/boto/connection.py", line 1071, in make_request
    retry_handler=retry_handler)
  File "/openedx/venv/local/lib/python2.7/site-packages/boto/connection.py", line 1030, in _mexe
    raise ex
socket.gaierror: [Errno -2] Name or service not known
command terminated with exit code 1
```
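The final `socket.gaierror` is raised while boto (via the `s3boto` storage backend, presumably pointed at the minio plugin's endpoint) tries to contact the storage host, which suggests the host name could not be resolved from inside the pod. A minimal sketch for checking that from a Python shell in the same container is below; the function name `resolve` is hypothetical, and the host name you test should be whatever your minio configuration actually uses.

```python
import socket


def resolve(hostname):
    """Return the sorted list of IP addresses `hostname` resolves to, or [] on failure.

    Hypothetical helper. A failure here is the same kind of error as the
    `socket.gaierror: [Errno -2] Name or service not known` raised by boto above.
    """
    try:
        infos = socket.getaddrinfo(hostname, None)
    except socket.gaierror as exc:
        print("cannot resolve %r: %s" % (hostname, exc))
        return []
    # Each entry is (family, type, proto, canonname, sockaddr); sockaddr[0] is the IP
    return sorted(set(info[4][0] for info in infos))
```

An empty result for the storage host from inside the LMS pod, while the same name resolves on the host machine, would indicate that the pod's DNS does not know about that name.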